The Internet Needed HTTPS. AI Needs IAN.

AI is Taking Over. But Who Verifies It?

AI is no longer just answering questions. It is deciding truth. It determines what we read, what we invest in, who gets hired, and how policies are shaped.

But there is one problem no one is talking about.

AI is a black box. No transparency. No accountability. No way to verify its decisions.

A handful of corporations control intelligence itself.

And we are all just supposed to trust it.

The Knowledge Monopoly Problem

For most of human history, intelligence has been collective. Knowledge was not owned by a single entity. It was shared, debated, and refined. The internet expanded this idea, making information more open than ever through Wikipedia, open-source software, and digitized archives.

But AI is reversing this trend.

A handful of corporations, including OpenAI, Google, and Anthropic, own the models, the data, and the outputs. They control what AI generates and who profits from it. Meanwhile, AI is being trained on our knowledge, our interactions, and our intellectual property, for free.

Companies are building trillion-dollar AI businesses on the backs of human intelligence, while the people contributing this knowledge receive nothing in return.

And what do we get in exchange?

An AI system we cannot trust.

AI hallucinates facts. In February 2023, a factual error about exoplanet imaging in a Google Bard demo was followed by a drop of roughly $100 billion in Alphabet’s market cap in a single day.

AI is biased. Responses can shift with language, region, and the priorities of the company behind the model. Ask the same question in different countries, and you may get different "truths."

AI is automating misinformation at scale. Deepfakes, AI-generated false news, and misleading content are becoming hard to distinguish from reality.

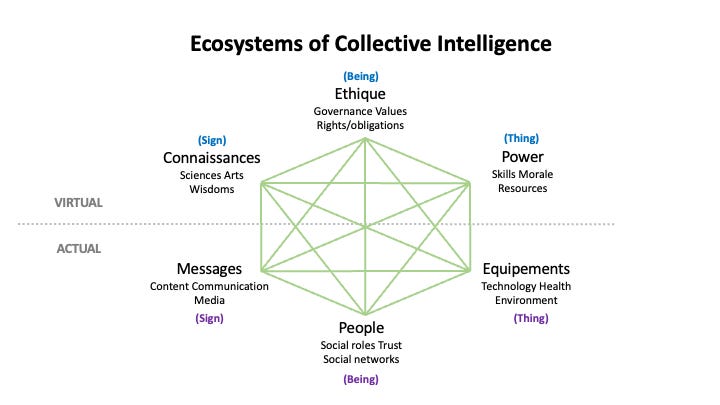

Pierre Lévy, the father of collective intelligence, wrote that "Intelligence is the trait of an ecosystem in relation with other ecosystems; it is collective by nature."

But AI today is violating this principle. Instead of an open knowledge network, AI is privatizing intelligence. Instead of enhancing collective intelligence, AI is dictating knowledge from the top down.

If we do not fix this now, we will wake up in a world where AI dictates reality, and no one can challenge it.

The First AI Trust Layer

IAN ensures that AI-generated knowledge is verifiable, auditable, and resistant to manipulation.

Instead of relying on a single, centralized AI model, IAN:

Aggregates multiple AI outputs to cross-verify accuracy.

Ranks responses by trust using a decentralized scoring mechanism.

Records verified knowledge on-chain for full transparency and compliance.
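The aggregation and ranking steps above can be sketched in a few lines of Python. The source does not specify how IAN scores responses, so everything here is an assumption for illustration: the function names, the model names, and the use of simple agreement share as a stand-in for a trust score.

```python
from collections import Counter


def normalize(answer: str) -> str:
    """Lowercase and collapse whitespace so trivially different answers match."""
    return " ".join(answer.lower().split())


def rank_by_agreement(outputs: dict[str, str]) -> list[tuple[str, float]]:
    """Score each distinct answer by the share of models that produced it.

    `outputs` maps a hypothetical model name to its answer. Agreement share
    is an illustrative stand-in for a trust score, not IAN's actual mechanism.
    """
    counts = Counter(normalize(a) for a in outputs.values())
    total = len(outputs)
    ranked = [(answer, n / total) for answer, n in counts.items()]
    # Highest score first; ties are broken alphabetically for determinism.
    return sorted(ranked, key=lambda pair: (-pair[1], pair[0]))


# Hypothetical outputs from three models answering the same question.
outputs = {
    "model_a": "Paris",
    "model_b": "paris",
    "model_c": "Lyon",
}
ranked = rank_by_agreement(outputs)
top_answer, top_score = ranked[0]
print(top_answer, round(top_score, 2))
```

Agreement alone is a weak signal, since several models can share the same error. A real scoring mechanism would likely weight sources and validators differently.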

IAN turns AI from a black box into a provable system of truth.

But AI verification requires more than just better models. It requires decentralization.

Just as the internet needed HTTPS to protect information in transit, AI needs a trust layer to protect truth itself.

Pierre Lévy states that "shared memory supports each phase of the cycle, helping to maintain the coordination, coherence, and identity of collective intelligence."

IAN is building that for AI. Every AI response is recorded in an auditable, on-chain memory system, ensuring that intelligence is not just generated, but provable and owned by those who contribute to it.
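One common way to make a shared memory auditable is a hash chain, where each entry commits to the one before it. The sketch below is an in-memory stand-in for an on-chain record, not IAN's implementation. The `AuditLog` class and its field names are hypothetical.

```python
import hashlib
import json
from dataclasses import dataclass, field


@dataclass
class AuditLog:
    """Append-only log where each entry stores the hash of the previous one."""

    entries: list[dict] = field(default_factory=list)

    @staticmethod
    def _hash(body: dict) -> str:
        encoded = json.dumps(body, sort_keys=True).encode("utf-8")
        return hashlib.sha256(encoded).hexdigest()

    def append(self, response: str) -> dict:
        # The first entry links to a fixed all-zero hash.
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        body = {"index": len(self.entries), "response": response, "prev": prev_hash}
        entry = {**body, "hash": self._hash(body)}
        self.entries.append(entry)
        return entry

    def verify(self) -> bool:
        """Recompute every hash and link; any edited entry breaks the chain."""
        prev_hash = "0" * 64
        for entry in self.entries:
            body = {k: entry[k] for k in ("index", "response", "prev")}
            if entry["prev"] != prev_hash or entry["hash"] != self._hash(body):
                return False
            prev_hash = entry["hash"]
        return True


log = AuditLog()
log.append("Answer 1")
log.append("Answer 2")
intact = log.verify()  # the untouched chain is consistent

log.entries[0]["response"] = "Tampered"
tampered_ok = log.verify()  # the edit no longer matches the stored hash
```

A chain like this only makes tampering detectable. Preventing it is the job of the decentralized network that stores and agrees on the entries.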

For the first time, AI is not just generating answers. It is proving them.

The $IAN Token: Aligning AI Incentives with Network Growth

Knowledge has always been collective. But until now, it had no economy.

The internet democratized access to information, but it did not fix the incentives behind intelligence creation. AI companies extract intelligence from the public for free while earning billions from it.

IAN flips that model. Instead of intelligence being dictated from the top down, IAN rewards users for contributing to AI verification.

The $IAN token powers AI trust, creating an incentive system where:

Users access AI interactions based on token holdings. When you hold $IAN, you receive free access to IAN’s network of AI models. That access is valuable, and it is secured by the network.

Validators earn $IAN tokens for verifying AI-generated knowledge. Instead of blindly trusting AI, the network ensures knowledge is proven, not dictated.

Enterprises stake $IAN to integrate verifiable AI intelligence. As AI adoption grows, the demand for trusted AI grows with it.
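The three roles above can be illustrated with a toy ledger. This is a sketch under stated assumptions, not the $IAN token contract: the `Ledger` class, the access threshold, and the reward amount are all hypothetical values chosen for the example.

```python
from dataclasses import dataclass, field

ACCESS_THRESHOLD = 100  # hypothetical minimum balance for network access
VALIDATION_REWARD = 5   # hypothetical reward per verified answer


@dataclass
class Ledger:
    """In-memory balances and stakes, keyed by account name."""

    balances: dict[str, int] = field(default_factory=dict)
    stakes: dict[str, int] = field(default_factory=dict)

    def has_access(self, user: str) -> bool:
        """Users gain access once their holdings reach the threshold."""
        return self.balances.get(user, 0) >= ACCESS_THRESHOLD

    def reward_validator(self, validator: str) -> None:
        """Validators earn tokens for each verification they complete."""
        self.balances[validator] = self.balances.get(validator, 0) + VALIDATION_REWARD

    def stake(self, enterprise: str, amount: int) -> None:
        """Enterprises move tokens from their balance into a stake."""
        if amount <= 0 or self.balances.get(enterprise, 0) < amount:
            raise ValueError("insufficient balance to stake")
        self.balances[enterprise] -= amount
        self.stakes[enterprise] = self.stakes.get(enterprise, 0) + amount


ledger = Ledger(balances={"alice": 150, "acme": 1000})
ledger.reward_validator("bob")
ledger.stake("acme", 400)
print(ledger.has_access("alice"), ledger.balances["bob"], ledger.stakes["acme"])
```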

Lévy describes how intelligence relies on shared memory and a self-organizing cycle. But today’s AI models operate on privatized, centralized memory, controlled by corporations, not communities.

IAN fixes this.

IAN is turning AI’s fragmented, privatized intelligence into an open, verifiable system.

Every AI interaction, every validation, every transaction is recorded on-chain. Trust is no longer just a promise. It is provable.

AI is the Future. But It Has to Be Trustworthy.

AI is already shaping the world. But the world cannot run on blind trust.

The internet needed HTTPS. AI needs IAN.

Spotify and YouTube reshaped media by giving creators new ways to distribute and monetize their work. IAN ensures that AI contributors own and monetize their intelligence.

If we do not fix this now, we will wake up in a world where AI dictates reality, and no one can challenge it.

AI is inevitable.

But so is AI trust.

IAN is making it happen.